You've spent weeks gathering data and a small fortune on annotation services, but your model's accuracy is hitting a ceiling. You've tweaked the hyperparameters and tried a different architecture, yet nothing budges. The culprit is likely hiding in plain sight: labeling errors. Even in a gold-standard dataset like ImageNet, about 5.8% of the labels are wrong. When your ground truth is lying to your model, no amount of complex math can fix it.

If you're managing a machine learning project, you need to stop treating your labels as absolute truth. Labeling errors aren't just "typos"; they are systematic failures that can degrade your model's performance more than a poor choice of neural network. To fix this, you have to move toward a data-centric AI approach, where you spend as much time auditing your data as you do tuning your code.

Common Patterns of Labeling Errors

Before you can fix the data, you need to know what you're looking for. Errors don't usually look random; they follow specific patterns depending on the task.

In computer vision, you'll often see "missing labels," which account for about 32% of errors in object detection. Imagine a self-driving car dataset where a pedestrian is simply not boxed; that's a critical failure. Then there's the "incorrect fit," where a bounding box is slightly off, making the model struggle to find the exact edges of an object. If you change your taxonomy halfway through a project without a version control system, you'll run into "midstream tag additions," creating a messy hybrid of old and new labels.

Text classification has its own set of headaches. You'll find "out-of-distribution" examples (data points that don't actually fit into any of your predefined categories) and "ambiguous examples," where a piece of text could reasonably belong to two different classes. In entity recognition, the struggle is often with boundaries. According to research from MIT's Data-Centric AI Center, 41% of errors in this area involve incorrect entity boundaries, like highlighting only half of a person's name.

Why does this happen? Usually, it's not because the annotators are lazy. About 68% of these mistakes stem from ambiguous labeling instructions. If your guidelines are vague, your data will be inconsistent.

How to Spot Labeling Errors Algorithmically

You can't manually check ten thousand images; you'll go crazy. Instead, use an algorithmic approach to flag the most suspicious labels for human review.

One of the most effective methods is confident learning, a framework that estimates the joint distribution of label noise by analyzing the discrepancies between predicted probabilities and given labels. Tools like cleanlab, an open-source Python framework that implements confident learning, use this to find "noisy" labels. By comparing what the model is very confident about with what the label says, you can find errors with 65-82% precision.
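To make the idea concrete, here is a minimal pure-Python sketch of the thresholding step behind confident learning. It is a simplification for illustration, not cleanlab's actual implementation: the function name `find_suspect_labels` and the toy data are hypothetical, and in practice the probabilities should come from a model that did not train on the examples being scored.

```python
def find_suspect_labels(labels, pred_probs):
    """Flag likely label errors using per-class confidence thresholds.

    labels: list of integer class ids as given by annotators
    pred_probs: list of per-class probability lists, one per example
    Returns (index, given_label, likely_label) tuples.
    """
    n_classes = len(pred_probs[0])

    # Threshold for class k: the mean probability the model assigns to k
    # across the examples that annotators labeled as k.
    sums = [0.0] * n_classes
    counts = [0] * n_classes
    for y, p in zip(labels, pred_probs):
        sums[y] += p[y]
        counts[y] += 1
    thresholds = [
        sums[k] / counts[k] if counts[k] else 1.0 for k in range(n_classes)
    ]

    suspects = []
    for i, (y, p) in enumerate(zip(labels, pred_probs)):
        # Classes the model is confident about for this example.
        confident = [k for k in range(n_classes) if p[k] >= thresholds[k]]
        if not confident:
            continue
        likely = max(confident, key=lambda k: p[k])
        if likely != y:
            suspects.append((i, y, likely))
    return suspects


# Toy data (hypothetical): example 2 is labeled 0 but looks like class 1.
labels = [0, 0, 0, 1, 1, 1]
pred_probs = [
    [0.9, 0.1], [0.8, 0.2], [0.1, 0.9],
    [0.2, 0.8], [0.1, 0.9], [0.3, 0.7],
]
suspects = find_suspect_labels(labels, pred_probs)
```

Per-class thresholds matter because a model can be systematically less confident on some classes; a single global cutoff would over-flag those classes.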

Another way is model-assisted validation. If you have a model with at least 75% baseline accuracy, you can run it against your annotated data. When the model predicts a class with high confidence but the label says otherwise, you've found a potential error. This method can catch up to 85% of mistakes, especially in high-stakes fields like medical imaging where the error rates are typically 38% higher than in general datasets.
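A minimal sketch of that check, assuming you already have each sample's predicted class and the model's confidence in it. The function name `flag_disagreements` and the 0.9 cutoff are illustrative assumptions, not values from any particular tool.

```python
def flag_disagreements(labels, predictions, confidences, min_confidence=0.9):
    """Return indices where a confident prediction contradicts the label."""
    flagged = [
        i
        for i, (label, pred, conf) in enumerate(
            zip(labels, predictions, confidences)
        )
        if pred != label and conf >= min_confidence
    ]
    # Most confident disagreements first, so reviewers see the
    # likeliest errors early.
    flagged.sort(key=lambda i: confidences[i], reverse=True)
    return flagged


# Hypothetical example data.
labels = ["cat", "dog", "cat", "dog", "cat"]
predictions = ["cat", "cat", "dog", "dog", "dog"]
confidences = [0.99, 0.95, 0.60, 0.97, 0.98]
review_queue = flag_disagreements(labels, predictions, confidences)
```

Sample 2 also disagrees with its label, but at 0.60 confidence it stays out of the queue; lowering `min_confidence` trades reviewer time for recall.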

| Method | Primary Tool | Best For | Pros | Cons |
|---|---|---|---|---|
| Confident Learning | cleanlab | Classification | High statistical rigor | Requires Python expertise |
| Multi-Annotator Consensus | Label Studio | High-stakes data | Reduces errors by 63% | 3x higher cost |
| Model-Assisted Validation | Encord Active | Computer Vision | Visualizes errors quickly | Needs high RAM (16GB+) |

The Process of Asking for Corrections

Once you've flagged a thousand potential errors, you can't just tell your annotation team "this is all wrong." You need a structured feedback loop to ensure the corrections actually improve the dataset.

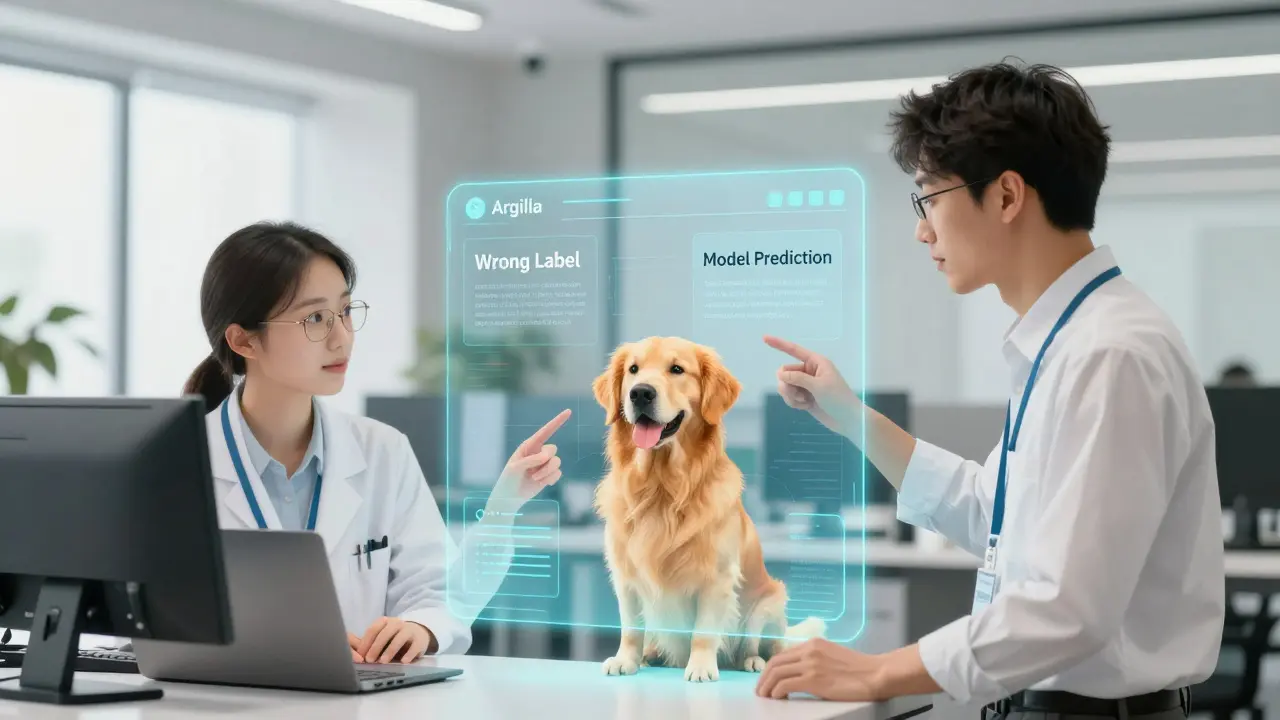

Start by loading your flagged examples into a review interface. Argilla, an open-source data curation platform that lets ML engineers visualize and correct label errors through a web UI, is great for this because it integrates directly with Hugging Face models. This allows you to present the "wrong" label alongside the model's prediction, making it easier for a human to decide who is right.

When asking for corrections, provide the context. Instead of saying "Fix image #402," say "The model is 98% sure this is a 'Golden Retriever,' but it's labeled as 'Labrador.' Please verify." This reduces the cognitive load on the annotator and speeds up the process.
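If you generate these requests from a list of flagged samples, a small helper keeps the wording consistent. This is only a sketch; the function name `build_review_request` and its parameters are hypothetical.

```python
def build_review_request(sample_id, given_label, predicted_label, confidence):
    """Format a correction request that shows the annotator both sides."""
    return (
        f"Sample {sample_id}: the model is {confidence:.0%} sure this is "
        f"'{predicted_label}', but it is labeled '{given_label}'. "
        "Please verify."
    )


message = build_review_request(402, "Labrador", "Golden Retriever", 0.98)
```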

If you're using a professional service, implement a consensus workflow. Have a second or third annotator review the flagged error. While this adds about 30-60 minutes per sample, it boosts correction accuracy from 65% to nearly 89%. If you find that a specific type of error is happening repeatedly, don't just fix the label; update your labeling instructions. A simple addition of an example image can reduce errors by 47%.
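One simple way to resolve a consensus review is a majority vote that refuses to decide on ties. The sketch below assumes each reviewer submits one label per flagged sample; `resolve_by_consensus` and the `min_agreement` parameter are hypothetical names.

```python
from collections import Counter


def resolve_by_consensus(votes, min_agreement=2):
    """Return the majority label, or None if reviewers do not agree enough."""
    if not votes:
        return None
    (top_label, top_count), *rest = Counter(votes).most_common()
    tied = bool(rest) and rest[0][1] == top_count
    if top_count < min_agreement or tied:
        return None  # escalate to an expert instead of guessing
    return top_label


decision = resolve_by_consensus(
    ["Labrador", "Golden Retriever", "Golden Retriever"]
)
```

Returning `None` on ties or weak agreement keeps ambiguous samples visible, which is often a hint that the guidelines need another example.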

Choosing the Right Tool for Your Workflow

Not all error detection tools are created equal. Your choice depends on your technical skills and the type of data you have.

If you're a Python developer who loves statistics, cleanlab is the gold standard. It's the most widely used among ML engineers (about 42% market share) because of its mathematical foundation. However, be warned: it has a steep learning curve. Most analysts need about 8 hours of training just to get started.

For enterprise teams who want a "one-click" experience, Datasaur, an enterprise data annotation platform with automated label error detection for tabular and text data, is a better bet. It integrates the detection directly into the annotation platform, which removes the friction of moving data between different tools. Just keep in mind that it currently lacks support for object detection tasks.

If you're working with heavy images and need a visual interface, Encord Active is the way to go. It specializes in computer vision and helps you see exactly where bounding boxes are failing. The tradeoff is the hardware requirement; you'll need a beefy machine with at least 16GB of RAM to handle large datasets.

Pitfalls to Avoid When Cleaning Data

It's tempting to let an algorithm do all the work, but that's a dangerous game. Over-reliance on automated detection can create a "feedback loop" of errors. If your algorithm systematically misidentifies a rare minority class as an error, you might accidentally erase the very diversity your model needs to be fair and accurate.

Another common mistake is ignoring version control. When you update a label based on a correction, you must document why it was changed. Without an audit trail, you won't be able to perform a root cause analysis to see if your annotators are consistently misunderstanding a specific rule. If you don't track these changes, you're just playing whack-a-mole with your data.
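An audit trail does not need special tooling to get started. The sketch below records every correction with its reason and then counts reasons for root cause analysis; the `LabelChange` fields and helper names are assumptions chosen for illustration.

```python
from collections import Counter
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class LabelChange:
    sample_id: str
    old_label: str
    new_label: str
    reason: str
    changed_by: str
    changed_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def record_change(log, labels, sample_id, new_label, reason, changed_by):
    """Apply a correction and append an audit entry describing it."""
    entry = LabelChange(
        sample_id, labels[sample_id], new_label, reason, changed_by
    )
    labels[sample_id] = new_label
    log.append(entry)
    return entry


def reasons_summary(log):
    """Count corrections per reason to spot recurring misunderstandings."""
    return Counter(entry.reason for entry in log)


# Hypothetical example data.
labels = {"img_402": "Labrador", "img_403": "Labrador"}
log = []
record_change(log, labels, "img_402", "Golden Retriever",
              "breed confusion", "reviewer_1")
record_change(log, labels, "img_403", "Golden Retriever",
              "breed confusion", "reviewer_2")
```

If one reason dominates the summary, that is the rule to rewrite in the guidelines.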

Lastly, don't try to fix everything at once. Correcting just 5% of label errors in a dataset like CIFAR-10 has been shown to improve test accuracy by 1.8%. Focus on the high-confidence errors first. Trying to reach 0% error is a waste of resources; aim for a "clean enough" dataset that allows the model to converge without being misled by noise.

How often should I check for labeling errors?

You should perform a label audit at three key stages: immediately after the first batch of annotations to refine guidelines, after the full dataset is labeled, and whenever you notice a significant plateau in model performance. In a typical MLOps pipeline, this is a recurring step in the data curation phase.

Can I use the same model to detect errors in its own training data?

Yes, but with caution. This is essentially what model-assisted validation does. However, the model might simply be "overfitting" to the errors. To mitigate this, use techniques like cross-validation or a separate "golden set" of manually verified data to ensure the model isn't just echoing the noise.
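The cross-validation idea can be sketched as follows: every sample is scored by a model that never saw it during training. The `train_fn` and `predict_fn` callables are hypothetical placeholders for your own training and inference code, and the round-robin fold assignment assumes the data has been shuffled beforehand.

```python
def out_of_fold_predictions(samples, labels, train_fn, predict_fn, n_folds=5):
    """Predict each sample with a model trained without that sample."""
    n = len(samples)
    predictions = [None] * n
    for fold in range(n_folds):
        # Round-robin fold assignment; shuffle the data first if it is
        # ordered by class or by collection date.
        held_out = [i for i in range(n) if i % n_folds == fold]
        train_idx = [i for i in range(n) if i % n_folds != fold]
        model = train_fn(
            [samples[i] for i in train_idx],
            [labels[i] for i in train_idx],
        )
        for i in held_out:
            predictions[i] = predict_fn(model, samples[i])
    return predictions
```

The resulting predictions can then be compared against the given labels, because a model cannot memorize a noisy label it was never shown.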

What is the most common cause of labeling errors in text datasets?

The most common causes are ambiguous instructions and overlapping class definitions. When two categories (e.g., "Positive" and "Very Positive") aren't clearly distinguished in the guidelines, annotators will disagree, leading to high noise levels in the data.

Is it better to delete noisy labels or correct them?

If you have plenty of data, deleting (or ignoring) high-noise examples can be faster and safer. However, if you're working with a small dataset or rare classes, correcting them is essential. Correcting labels preserves the signal that the model needs to learn a specific class.

Do labeling errors affect all ML models equally?

No. Simpler models can sometimes be more robust to noise, while very complex, deep neural networks can accidentally "memorize" the errors (overfitting), which leads to poor generalization on real-world data. This is why data-centric AI is so critical for large-scale models.

Next Steps for Data Quality

If you're just starting, don't buy a fancy tool yet. Start by creating a "Golden Dataset": a small set of 100-500 samples that are verified by multiple experts. Use this as your benchmark to test how accurate your current annotators are.
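Scoring annotators against the golden set takes only a few lines. This sketch assumes labels are stored as plain dictionaries keyed by sample id; `annotator_accuracy` and the data layout are hypothetical.

```python
def annotator_accuracy(golden, annotations):
    """Share of golden-set samples each annotator labeled correctly.

    golden: dict mapping sample_id -> expert-verified label
    annotations: dict mapping annotator -> {sample_id: label}
    """
    scores = {}
    for annotator, given in annotations.items():
        # Only score samples that are part of the golden set.
        scored = [sid for sid in given if sid in golden]
        if not scored:
            continue
        correct = sum(given[sid] == golden[sid] for sid in scored)
        scores[annotator] = correct / len(scored)
    return scores


# Hypothetical example data.
golden = {"s1": "cat", "s2": "dog", "s3": "cat", "s4": "dog"}
annotations = {
    "ann_a": {"s1": "cat", "s2": "dog", "s3": "cat", "s4": "dog"},
    "ann_b": {"s1": "cat", "s2": "cat", "s3": "cat", "s4": "cat"},
}
scores = annotator_accuracy(golden, annotations)
```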

For those in regulated industries like healthcare, follow the FDA's 2023 guidance. You'll need a rigorous validation plan that documents every labeling error found and how it was remediated. This isn't just about accuracy; it's about compliance and safety.

Finally, if you're dealing with multimodal data (images and text together), be extra careful. Current detection methods only reach about 52% precision on multimodal errors. You'll need a much heavier human-in-the-loop presence for these projects than you would for simple image classification.